The 80 Gbps barrier has finally been broken (and yes, we are rounding up)!

Well, at least it has been reached by someone other than MikroTik. It took us quite a while to get all the right pieces in place to push 80 Gbps of traffic through the CCR1072, but with the latest round of servers delivered to our lab, we were able to go beyond our previous high-water mark of 54 Gbps all the way to just under 80 Gbps. There have been a number of questions about this particular router and what its performance will look like in the real world. While this is still a lab test, we are using non-MikroTik equipment and iperf, which is widely regarded as an accurate tool for measuring TCP and UDP performance.

Video of the CCR1072-1G-8S+ in action (Turn up your volume to hear the roar of the ESXi servers as they approach 80 Gbps)

How we did it – The Hardware

CCR1072-1G-8S+ – Obviously you can’t have a test of the CCR1072 without one to test on. Our CCR1072-1G-8S+ is a pre-production model so there are some minor differences between it and the units that are shipping right now.

HP DL360-G6 – It’s hard to find a better value in the used server market than the HP DL360 series. Servers in this series can generally be found on eBay for under $500 USD and make great ESXi hosts for development and testing work.

Intel x520-DA2 10 Gbps PCI-E NIC – This NIC was definitely not the cheapest 10 gig NIC on the market, but after some research, we found it was one of the most compatible and stable across a wide variety of x86 hardware. The only downside to this particular NIC is that it requires Intel-branded SFP+ modules. We tried several types of generics and, after reading some forum posts, figured out that it only accepts a few specific types of Intel SFP+ modules. This didn’t impact performance in any way but doubled the cost of the SFPs required.

How we did it – The Software

RouterOS – We tried several versions throughout the testing cycle, but settled on 6.30.4 bugfix.

iperf3 – If you need to generate traffic for a load test, this is probably one of the best programs to use.

VMware ESXi 6.0 – There were certainly a fair number of hypervisors to choose from, and we even considered a Docker deployment for the performance advantages, but in the end VMware was the easiest to deploy and manage since we needed some flexibility in the vSwitch and a 9000 MTU.

CentOS 6.6 Virtual Machines – We chose CentOS as the base OS for TCP throughput testing because it supports VMware Tools and the VMXNET3 paravirtualized NIC. It is not possible to get a VM to 10 Gbps of throughput without using a paravirtualized NIC, so this limits the range of Linux distros to mainstream types like Ubuntu and CentOS. Also, we tend to use CentOS more than the other flavors of Linux, so it allowed us to get the VMs ready to generate traffic quickly.
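As a rough illustration of how an iperf3 run between two of the VMs might be driven and read back, here is a minimal sketch. The server address, stream count and duration are hypothetical and are not the values used in this test; the `-c`, `-P`, `-t` and `-J` options and the `end.sum_received.bits_per_second` field are standard iperf3 for TCP runs.

```python
import json


def build_iperf3_client_cmd(server, streams=4, seconds=30):
    """Return the argv list for an iperf3 TCP client run with JSON output."""
    return [
        "iperf3",
        "-c", server,        # connect to the iperf3 server at this address
        "-P", str(streams),  # number of parallel TCP streams
        "-t", str(seconds),  # test duration in seconds
        "-J",                # print the results as JSON
    ]


def received_gbps(iperf3_json_text):
    """Extract the receiver-side TCP throughput in Gbps from iperf3 JSON output."""
    report = json.loads(iperf3_json_text)
    return report["end"]["sum_received"]["bits_per_second"] / 1e9


if __name__ == "__main__":
    # Hypothetical server VM address; replace with a VM on the far-side VLAN.
    print(" ".join(build_iperf3_client_cmd("192.168.200.10")))
```

The argv list can be handed to `subprocess.run(cmd, capture_output=True, text=True)` on a client VM, with `iperf3 -s` already running on the server VM, and the captured stdout passed to `received_gbps`.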

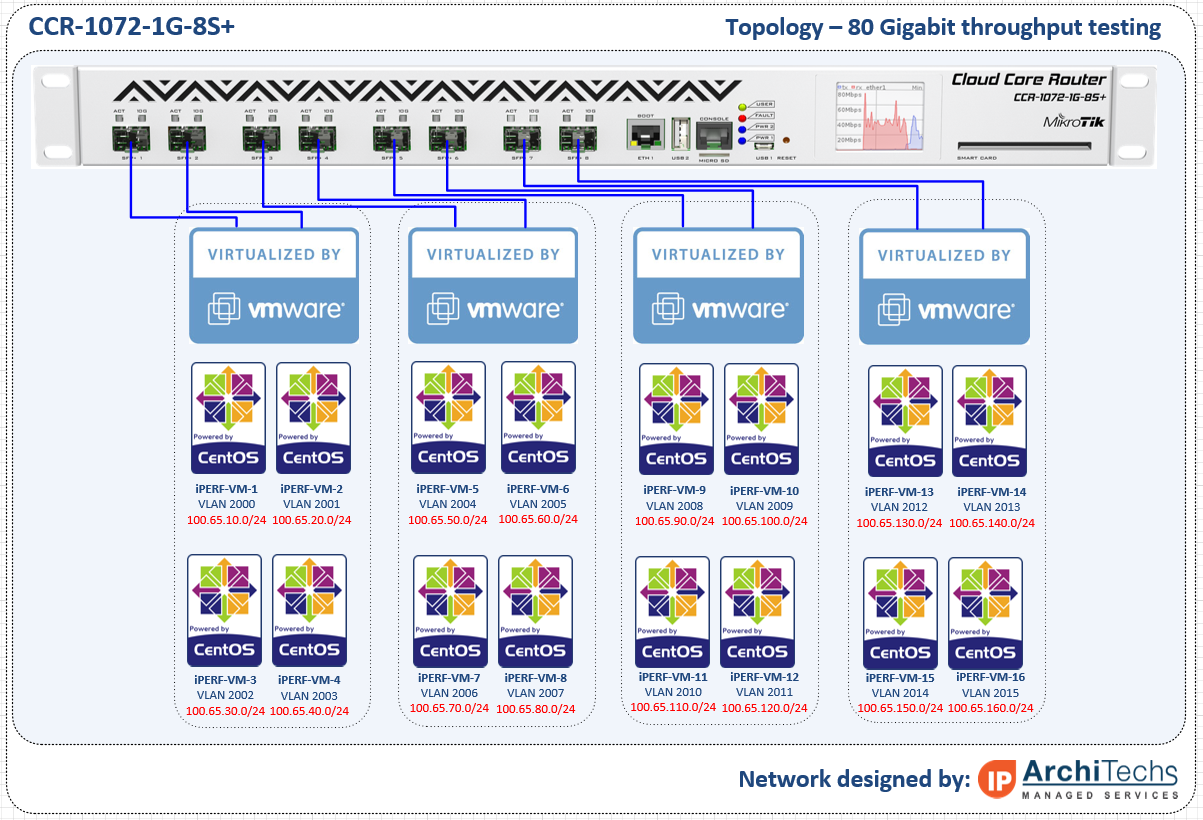

Network Topology for the test – We essentially had to put two VLANs on every physical interface so that the link could be fully loaded by using the 1072 to route between VLANs. A VM was built on each VLAN and tagged in the vswitch for simplicity. In earlier tests, we tried to use LACP between ESXi and the 1072 but had problems loading the links fully with the hashing algorithms we had available.

RouterOS Config for the test

www.iparchitechs.com/iparchitechs.com-ccr1072-80gig-test-config.rsc

Conclusions, challenges and what we learned

The CCR1072-1G-8S+ is an extremely powerful router and had little difficulty reaching full throughput once we were able to muster enough resources to load all of the links. As RouterOS continues to develop and moves into version 7, this router has the potential to be extremely disruptive to mainstream network vendors. At a list price of $3,000.00 USD, this router is an absolute steal for the performance, 1U footprint and power consumption. Look for more and more of these to show up in data centers, ISPs and enterprises.

Challenges

There have been a number of things that we have had to work through to get to 80 Gbps but we finally got there.

- VMware ESXi – LACP Hashing – Initially we built LACP channels between the ESXi hosts and the 1072, expecting to load the links by using multiple source and destination IPs, but we ran into issues with traffic getting stuck on one side of the LACP channel and had to move to independent links.

- Paravirtualized NIC – The VM guest OS must support a paravirtualized NIC like VMXNET3 to reach 10 Gbps speeds.

- CPU Resources – TCP consumes an enormous amount of CPU cycles, and with our original two ESXi hosts we were only able to get 27 Gbps per host, or 54 Gbps total.

- TCP Offload – Most NICs these days can offload TCP segmentation to hardware in the NIC rather than the CPU. We were never able to get this working properly in VMware to reduce the load on the hypervisor host CPUs.

- MTU – While the CCR1072 supports a maximum MTU of 10,226 bytes, the VMware vSwitch only supports 9000, so we lost some potential throughput by having to lower the MTU by more than 10%.
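To put rough numbers on the MTU point, the sketch below compares a 9000-byte and a 10,226-byte MTU on a single 10 Gbps link. It rests on assumptions that are not from the test itself: both values are treated as IP MTUs, each frame carries one 802.1Q tag plus standard Ethernet framing, and the IPv4 and TCP headers carry no options.

```python
# Bytes on the wire per frame beyond the IP packet (assumed framing):
# 14 Ethernet header + 4 802.1Q tag + 4 FCS + 8 preamble/SFD + 12 inter-frame gap.
WIRE_OVERHEAD_BYTES = 14 + 4 + 4 + 8 + 12

# IPv4 header + TCP header, both without options (assumption).
IP_TCP_HEADER_BYTES = 20 + 20


def mtu_reduction(max_mtu, used_mtu):
    """Fraction by which the MTU was lowered relative to the maximum."""
    return (max_mtu - used_mtu) / max_mtu


def frames_per_second(link_bps, mtu):
    """Full-size frames per second needed to fill a link at the given MTU."""
    return link_bps / ((mtu + WIRE_OVERHEAD_BYTES) * 8)


def tcp_payload_efficiency(mtu):
    """Share of on-wire bytes that is TCP payload for full-size frames."""
    return (mtu - IP_TCP_HEADER_BYTES) / (mtu + WIRE_OVERHEAD_BYTES)


if __name__ == "__main__":
    print(f"MTU reduction: {mtu_reduction(10226, 9000):.1%}")
    for mtu in (9000, 10226):
        fps = frames_per_second(10e9, mtu)
        eff = tcp_payload_efficiency(mtu)
        print(f"MTU {mtu}: {fps:,.0f} frames/s per 10G link, {eff:.2%} payload")
```

Under these assumptions the payload share of the wire barely moves (roughly 99.1% versus 99.2%), while the larger MTU needs about 12% fewer frames per second to fill a link, so any gain would come mostly from lower per-packet processing cost on the hosts and the router.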

What we learned

- MTU – In order for the CCR1072-1G-8S+ (and really any MikroTik) to reach maximum throughput, the highest L2/L3 MTU (we used 9000) must be used on:

  - The CCR

  - The Guest OS

  - The Hypervisor vSwitch

- Hypervisor Throughput – Although virtualization has come a long way, there is still a fair amount of performance lost especially in the network stack when putting a software layer between the Guest OS and the Hardware NIC.

- CPU Utilization – With no firewall rules or queues, CPU utilization on the router is very low: it never went above 10% across all cores. We haven’t had a chance to test with queues or firewall rules yet.

- TCP tuning for 10 Gbps in the Guest OS is very important – see this link: https://fasterdata.es.net/host-tuning/linux/

Please comment and let us know what CCR1072-1G-8S+ test you would like to see next!!!

Good info for many ISPs, but we are still waiting for RouterOS 7.

Thank you for doing this lab and sharing with us, it would be awesome if you could showcase how much can be done with the remaining cpu power (maybe filtering, BGP, etc … at 20/40/80Gbps)

Thanks for doing these tests, and sharing the data.

I was thinking about using the machine as a VPN concentrator. How many 500 Mb tunnels could this machine terminate in IPsec?

We have designed solutions that scale beyond 100,000 VPN tunnels across multiple CCRs. From a tunnel standpoint, you can easily get 10,000+ connections per CCR using OpenVPN; after that, it’s just a matter of scaling as many CCRs and switches as you need to get the bandwidth at the physical layer. As far as IPsec goes, you can get about 7.5 Gbps of hardware acceleration using a CCR.

Since you’re running Linux you can do away with VMWare to make this easier on yourself and take advantage of TCP offload.

What you want is network namespaces. Namespaces gives you the ability to run a process with its own routing table and interfaces, without needing full virtualisation with its own filesystem, memory allocation, etc. Have a look at Scott Lowe’s excellent blog post.

Note I don’t use ifconfig – see this serverfault post.

An example (must be root):

(Example assumes you put your router on 192.168.200.2/24 on VLAN 200 and 192.168.201.2/24 on VLAN 201)

Creating two VLANs on a physical interface (e.g. eth0.200, eth0.201)

Creating a network namespace

Binding one VLAN to a namespace

Bringing the VLAN up, giving the VLAN an IP address, and giving the namespace a default route

Running iperf server in the namespace

Then you can set up your Linux routing normally:

And finally run iperf:
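The steps listed above can be sketched as a sequence of `ip` commands. This is a minimal sketch under stated assumptions, not the commenter's exact commands: only the router addresses (192.168.200.2 and 192.168.201.2) come from the example, while the host addresses, the namespace name and the use of `iperf3` in place of `iperf` are hypothetical. The function only builds the command strings; running them requires root.

```python
def namespace_test_commands(
    parent="eth0",
    server_vlan=200,
    client_vlan=201,
    namespace="iperf-server",        # hypothetical namespace name
    server_addr="192.168.200.1/24",  # hypothetical host address on VLAN 200
    server_gateway="192.168.200.2",  # router address on VLAN 200 (from the example)
    client_addr="192.168.201.1/24",  # hypothetical host address on VLAN 201
    client_gateway="192.168.201.2",  # router address on VLAN 201 (from the example)
    server_subnet="192.168.200.0/24",
):
    """Return the shell commands, in order, for the namespace-based test."""
    srv_if = f"{parent}.{server_vlan}"
    cli_if = f"{parent}.{client_vlan}"
    in_ns = f"ip netns exec {namespace}"
    return [
        # Create two VLANs on the physical interface.
        f"ip link set {parent} up",
        f"ip link add link {parent} name {srv_if} type vlan id {server_vlan}",
        f"ip link add link {parent} name {cli_if} type vlan id {client_vlan}",
        # Create a network namespace and bind one VLAN to it.
        f"ip netns add {namespace}",
        f"ip link set {srv_if} netns {namespace}",
        # Bring the VLAN up, address it, and give the namespace a default route.
        f"{in_ns} ip link set {srv_if} up",
        f"{in_ns} ip addr add {server_addr} dev {srv_if}",
        f"{in_ns} ip route add default via {server_gateway}",
        # Run the iperf3 server inside the namespace, as a daemon.
        f"{in_ns} iperf3 -s -D",
        # Set up the other VLAN normally in the default namespace.
        f"ip link set {cli_if} up",
        f"ip addr add {client_addr} dev {cli_if}",
        f"ip route add {server_subnet} via {client_gateway}",
        # Run the client; traffic has to cross the router to reach the server.
        f"iperf3 -c {server_addr.split('/')[0]}",
    ]


if __name__ == "__main__":
    print("\n".join(namespace_test_commands()))
```

Because the server address lives in a separate namespace, the default namespace does not treat it as local and sends the traffic out through the router rather than looping it back inside the kernel.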

Cheers,

Tim

Just curious why you went through the extra headache, hassle, and overhead of virtualization? Why not just run linux on the bare metal as Tim mentioned?

Mostly because our lab is used to virtualize different vendors so we can plan and validate network designs for our day-to-day work. Just having Linux servers for load testing isn’t as practical as having a VMware hypervisor that we can segment with a vSwitch and a real switch. I wish we had enough time to put into development on the server side, but most of our work is network engineering/architecture, so ESXi makes sense for us because we can rapidly spin up just about any environment we are working on. Thanks for the feedback!

You really need to test throughput with minimum packet size (64 bytes) and full BGP table loaded. Otherwise we have no idea if this will survive in an ISP setting during a DDoS attack.

I realize that your test setup might have trouble generating this load, but even just a partial “we were able to generate 10 Gbit/s of small packets and the router did fine with that” is better than nothing. Right now we have a test with MTU 9000 at 80 Gbit/s. That is approximately 1 million packets per second, or just 0.5 Gbit/s with 64-byte packets. If that is all it can do, any kid with a 1 gig FTTH connection can kill it with a flood ping! We need numbers…
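The arithmetic behind those figures can be checked with a short sketch. It counts only the packet bytes themselves and ignores Ethernet framing overhead, which is an assumption made to match the rough numbers quoted above.

```python
def packets_per_second(bits_per_second, packet_bytes):
    """Packet rate needed to carry the given bit rate at a fixed packet size."""
    return bits_per_second / (packet_bytes * 8)


def throughput_gbps(pps, packet_bytes):
    """Bit rate in Gbit/s produced by a packet rate at a fixed packet size."""
    return pps * packet_bytes * 8 / 1e9


if __name__ == "__main__":
    pps = packets_per_second(80e9, 9000)
    print(f"{pps / 1e6:.2f} Mpps at 80 Gbit/s with 9000-byte packets")
    print(f"{throughput_gbps(pps, 64):.2f} Gbit/s at that rate with 64-byte packets")
```

This gives about 1.11 million packets per second and about 0.57 Gbit/s, in line with the approximate figures in the comment. It only shows the packet rate this test actually exercised and says nothing about the router's ceiling with small packets.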

All fair points, and we plan to do testing with smaller packet sizes, but remember that there are many different use cases for routers, and ISP edge/peering routers are just one of them. Enterprises and data centers frequently use a 9000 MTU on core network segments and especially on storage networks, which are one of the biggest growth areas in network engineering.

Hi,

Are there any updates on that kind of test?

I’m really interested in how this router can handle DDoS (UDP, SYN flood, etc.).

+1

I’m also interested in a test showcasing edge/peering scenario and PPS throughput.

Hi, can you run a test with smaller packets, for example 20-30 bytes?

Great job! I was looking for the best way to test my two CCR1036-12G-4S units.

I’m currently reading Benchmarking Methodology for Network Interconnect Devices (RFC 2544), which is the official testing methodology according to MikroTik.

It’s great that you share your experience.

Great reviews. I’m really enjoying playing with the CCR1072 myself but haven’t had time to lab it up, so real-world playing will have to do!

Re: Intel X520, it is possible to use unsupported SFPs, e.g. MikroTik ones:

echo "options ixgbe allow_unsupported_sfp=1,1" > /etc/modprobe.d/ixgbe-options.conf

depmod -a

update-initramfs -u

I can’t find original link that led me to it but it does work.

Also, have you tried ProxMox? I’ve moved away from ESXi completely, it’s got brilliant features and way more options…

Hello,

Great post, thank you very much!!!

I’m building a network with a similar setup. Could you please tell me which Intel SFP+ modules you used with the Intel x520-DA2 10 Gbps PCI-E NIC?

Regards,

Igor

Hello Kevin.

Can you share the conf of the MT ? The link is dead.

Were you using FastPath?